
Is It Possible to Use Pigeons to Carry 300 TB of SD Cards?

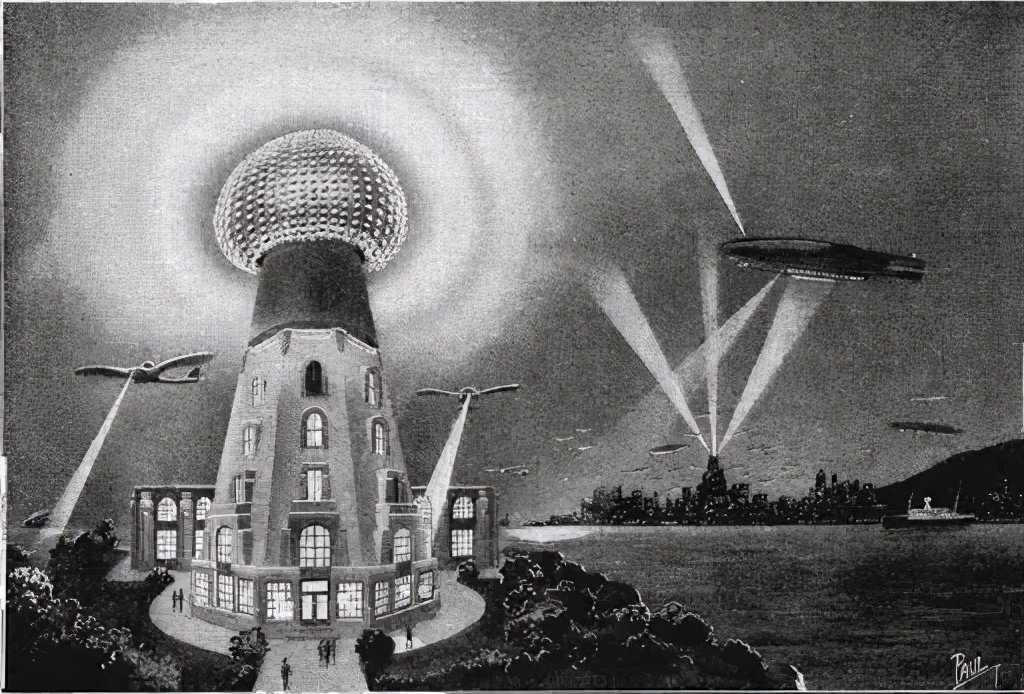

In 1901, Nikola Tesla rushed around raising money and built a giant iron tower, the “Wardenclyffe Tower”, near New York. The tower was nearly 60 meters high, and its large iron head looked just like a mushroom, from which it could shoot huge bolts of lightning into the atmosphere time and again.

Schematic diagram of the working principle of the Wardenclyffe Tower

Because Tesla kept his experiments strictly secret from outsiders, and because a tower shooting lightning looked quite weird, all sorts of strange rumors spread. Some people believed the tower was calling aliens, while others said it was a new type of charging device that could use electric arcs to power nearby electrical appliances.

In fact, the tower was meant for neither. According to Tesla, it was a communication device, similar to a radio station. Tesla believed that if the lightning carried enough energy, the signal could pass through the atmosphere and be received by towers of similar design far away.

Unfortunately, Tesla’s project was shut down before it was finished, and the tower was demolished in 1917. For tube punk enthusiasts, this may be a huge regret. But from a realistic point of view, Tesla’s vision was doomed from the very beginning. Even if high-voltage lightning really could transmit signals over long distances, it would be hard to get ordinary people to agree to a dangerous, noisy high-voltage discharger being built near their homes.

But at the time, Tesla was too caught up in his idea to see this. Transmitting information over long distances has always been extremely difficult for humans.

We are all familiar with the story of the marathon: the Greek allied forces defeated the invading Persian army at the Battle of Marathon, and a messenger ran more than 40 kilometers back to Athens to bring home the news of victory. The moment he shouted “Victory”, he collapsed and died.

In the roughly 2,400 years that followed, running messengers were gradually replaced by horses and post stations, but anyone who wanted to send a message over a distance still had to rely on the oldest method of all: one person carrying words to another. This came to an end when Bell invented the telephone in 1876, and humanity entered a new era.

Because information is so valuable, people have always pursued faster transmission and greater capacity, and they have tried all kinds of strange, exotic technology to get there. Some of these attempts succeeded and some failed. Tesla and his super electric tower were just one chapter in the history of human communication.

Another crazy episode occurred in the 1960s. To win the support of the American people for the Apollo moon landing program, NASA came up with a wonderful idea: use television, still a young medium at the time, to broadcast the astronauts’ actions on the moon.

Good as this idea sounds, it had a big problem. NASA had spent a decade developing a highly integrated communication system for the Apollo program, but that system had not been designed with room for TV broadcasting.

After thorough consideration, NASA decided to squeeze space for the TV signal out of the existing communication capacity. The ranging function of Apollo 11 was dropped, and the communication mode was changed from phase modulation, which is better suited to mobile equipment, to frequency modulation similar to that of a radio station.

This adjustment greatly increased the risk of a crash during landing, but it did free up about 700 kHz of communication bandwidth, allowing the Apollo astronauts to transmit 10 frames per second to Earth in real time. That is how the whole world could witness Armstrong step onto the moon, a giant leap for mankind.

Nowadays, anyone can turn on a phone and watch live video of strangers thousands of miles away. The Internet seems all-powerful, and the days when a single picture took minutes to load are gone. However, this hardly means that inventors who like exotic technology should give up on inventing faster ways to communicate.

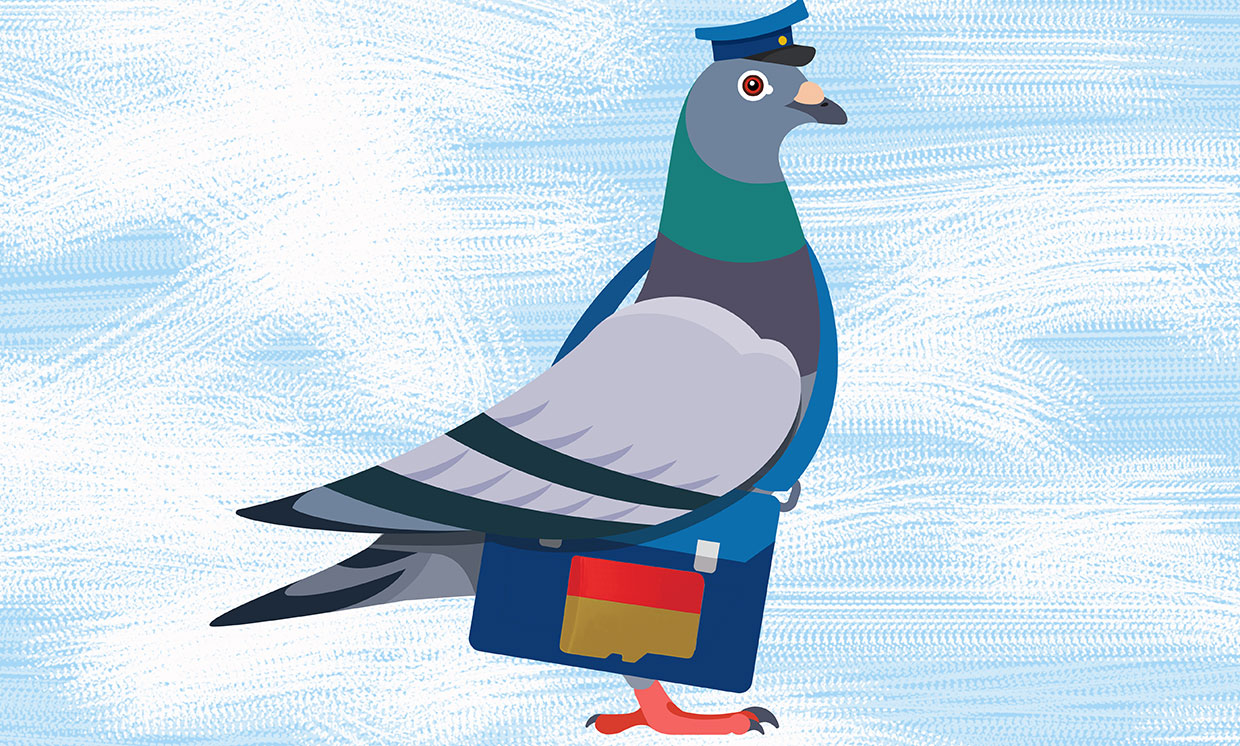

Last year, the Institute of Electrical and Electronics Engineers (IEEE) did a calculation: in the ideal case, a pigeon can carry nearly 300 high-capacity 1 TB SD cards and fly at 70 to 100 kilometers per hour. By this calculation, a pigeon needs only about half a day to carry 300 TB of data from Beijing to Shanghai. That is far quicker than a 200 Mbps home Internet connection, which would need around five months to transfer the same amount of data.
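The comparison above can be checked with a few lines of arithmetic. The sketch below is only a rough estimate: the 300 TB payload, the 70 to 100 km/h speed range, and the 200 Mbps link come from the text, while the 1,070 km straight-line distance between Beijing and Shanghai, the use of decimal terabytes, and the function names are assumptions made for illustration.

```python
# Only the 300 TB payload, the 70-100 km/h speed range, and the 200 Mbps
# link come from the text; everything else is an assumption.
PAYLOAD_BYTES = 300 * 10**12        # 300 TB, assuming decimal terabytes
LINK_BITS_PER_SECOND = 200 * 10**6  # 200 Mbps, assumed fully sustained
DISTANCE_KM = 1_070                 # assumed Beijing-Shanghai straight-line distance


def link_transfer_days(payload_bytes: int, bits_per_second: int) -> float:
    """Days needed to push the payload over a network link."""
    seconds = payload_bytes * 8 / bits_per_second
    return seconds / 86_400


def flight_hours(distance_km: float, speed_kmh: float) -> float:
    """Hours of non-stop flight at a constant speed."""
    return distance_km / speed_kmh


def effective_mbps(payload_bytes: int, hours: float) -> float:
    """Average throughput of the flight, in megabits per second."""
    return payload_bytes * 8 / (hours * 3_600) / 10**6


if __name__ == "__main__":
    days = link_transfer_days(PAYLOAD_BYTES, LINK_BITS_PER_SECOND)
    print(f"200 Mbps link: {days:.0f} days")
    for speed in (70, 100):
        hours = flight_hours(DISTANCE_KM, speed)
        mbps = effective_mbps(PAYLOAD_BYTES, hours)
        print(f"pigeon at {speed} km/h: {hours:.1f} h, about {mbps:,.0f} Mbps")
```

Under these idealized assumptions the link needs about 139 days, and real-world protocol overhead would push that higher, while the flight takes roughly 11 to 15 hours. The pigeon's average throughput works out to tens of gigabits per second, although its latency is of course measured in hours.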

Of course, fast as pigeons carrying high-capacity memory cards may be, they have obvious flaws. The Internet Engineering Task Force (IETF), which specifies standards for the Internet, published a proposal called IPoAC in 1990, short for “IP over Avian Carriers”. Put simply, it standardizes a way of carrying data packets by pigeon.

Experiments show that the packet loss rate of pigeons is around 55%, meaning that 5 out of 9 pigeons failed to bring their packets to the destination. In other words, although sending big data by pigeon is efficient, extra pigeons are needed to make sure the data gets delivered, which raises the cost considerably.
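To get a feel for how many extra pigeons that loss rate implies, here is a minimal sketch. It assumes each pigeon is lost independently with the same probability of 5/9, which the experiment does not establish, and the function names and the 99% reliability target are made up for illustration.

```python
import math

LOSS_RATE = 5 / 9  # from the text: 5 of 9 pigeons failed to deliver


def expected_pigeons_per_delivery(loss_rate: float) -> float:
    """Mean number of pigeons sent until one arrives (geometric distribution)."""
    return 1 / (1 - loss_rate)


def delivery_probability(loss_rate: float, copies: int) -> float:
    """Chance that at least one of `copies` identical payloads arrives."""
    return 1 - loss_rate ** copies


def copies_needed(loss_rate: float, target: float) -> int:
    """Smallest number of copies whose delivery probability reaches `target`."""
    return math.ceil(math.log(1 - target) / math.log(loss_rate))


if __name__ == "__main__":
    print(expected_pigeons_per_delivery(LOSS_RATE))  # average pigeons per success
    print(copies_needed(LOSS_RATE, 0.99))            # copies for a 99% chance
```

With these assumptions, an average of 2.25 pigeons must fly for each payload that arrives, and sending 8 identical copies at once is needed before the chance of at least one arriving reaches 99%.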

Besides, cloud service providers such as Amazon and Google do offer services that transport data by truck, because many business tasks have deadlines that the Internet alone cannot meet.

The reason for this phenomenon is that although communication between systems has become faster, communication inside a system has become faster still. A network speed of hundreds of Mbps may seem very high compared with a dozen years ago, but in fact it is barely comparable to the data transfer speed of a traditional mechanical hard drive.

The equipment that actually processes and produces information also needs to move data around. Its data throughput is on a completely different scale, and it has to cope with completely different problems.

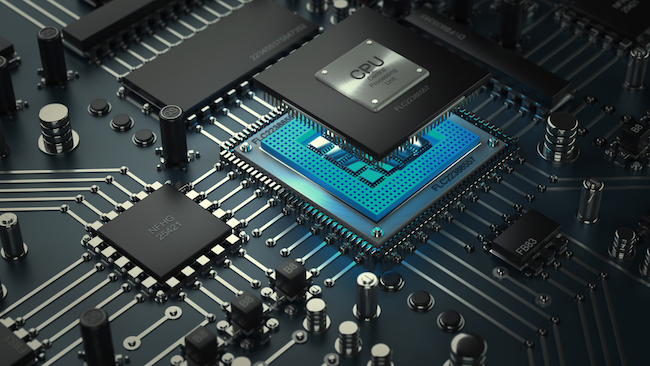

Modern CPUs process hundreds of GB of data per second, and the physical distance between the memory and the CPU is only a few centimeters. Yet even if electrical signals cross those few centimeters at close to the speed of light, there are still delays, and even errors caused by external electrical interference. Such delays and errors seem small, but they are enough to slow the processor down. To solve this problem, CPU designers put the cache directly on the chip, which minimizes the distance signals have to travel and keeps the CPU running efficiently.

The cache of a modern CPU is etched onto the same die as the processor, and its area is even larger than that of the actual computing units.
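The propagation delay mentioned above can be put into numbers. The sketch below assumes a 3 GHz clock, a 5 cm path between CPU and memory, and signals moving at about half the speed of light in circuit-board traces. None of these figures come from the article; they are illustrative values, and the function names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def clock_period_ns(clock_hz: float) -> float:
    """Duration of one clock cycle in nanoseconds."""
    return 1e9 / clock_hz


def one_way_delay_ns(distance_m: float, velocity_factor: float = 1.0) -> float:
    """Time for a signal to cover `distance_m`, in nanoseconds.

    `velocity_factor` is the signal speed as a fraction of the speed of light.
    """
    return distance_m / (velocity_factor * SPEED_OF_LIGHT_M_PER_S) * 1e9


if __name__ == "__main__":
    period = clock_period_ns(3e9)         # assumed 3 GHz clock
    delay = one_way_delay_ns(0.05, 0.5)   # assumed 5 cm trace at 0.5 c
    print(f"clock period:  {period:.3f} ns")
    print(f"one-way delay: {delay:.3f} ns ({delay / period:.1f} cycles)")
```

Under these assumptions a one-way trip already costs about one full clock cycle, and a request plus its reply costs about two, before the memory itself has done any work. On-chip cache sits millimeters away or less, so this wire delay shrinks accordingly.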

For ordinary people, a more intuitive sign is that the mechanical hard drive in a computer is becoming less and less useful. When upgrading an old computer, installing the system on a faster solid-state drive helps more than replacing the CPU or adding memory.

In fact, mechanical hard drives are not slow; they are among the fastest pieces of precision engineering that ordinary people can use. In a 7200 rpm mechanical hard disk, for example, the relative speed between the outer edge of the platter and the magnetic head is around 100 km/h, and at that speed the head must accurately find a track far narrower than 1 mm. The operating speed of mechanical hard drives is therefore already close to its physical limit.
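The platter speed quoted above follows from simple geometry. In the sketch below, the 7200 rpm figure is from the text, while the platter diameters (about 95 mm for a desktop drive and about 65 mm for a laptop drive) are assumed typical values, and the function name is hypothetical.

```python
import math


def rim_speed_kmh(rpm: float, platter_diameter_m: float) -> float:
    """Linear speed of the platter's outer edge in km/h."""
    revolutions_per_second = rpm / 60
    circumference_m = math.pi * platter_diameter_m
    meters_per_second = circumference_m * revolutions_per_second
    return meters_per_second * 3.6


if __name__ == "__main__":
    # Assumed platter diameters; only the 7200 rpm figure is from the text.
    for label, diameter_m in (("desktop, ~95 mm", 0.095), ("laptop, ~65 mm", 0.065)):
        print(f"{label}: {rim_speed_kmh(7200, diameter_m):.0f} km/h")
```

With these assumed diameters the outer edge moves at roughly 90 to 130 km/h, which matches the order of magnitude given in the text.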

People care so much about data transfer speed that they have had to turn to other technologies. Solid-state drives have no moving parts and store data purely as electrical charge. This makes them more stable in operation and lets them reach ultra-high speeds that mechanical hard drives, held back by their own physical limits, cannot achieve.

As the computers that produce data become more efficient, the amount of data inevitably expands. A decade ago, a 500 GB hard drive seemed enough to last a lifetime; nowadays, even a few terabytes of storage are easily used up. A decade ago, most people went online to send email and read news; today, full-time streamers push more than ten hours of live video to thousands of viewers every day. A survey in 2014 estimated that the total amount of data on the entire Internet added up to 1 million EB (1 EB equals 1 million TB); six years later, that figure may have grown by hundreds or even thousands of times.

Humanity’s constant use and production of information has already made managing all this data a formidable challenge. Because servers give off heat when running, 40% of the electricity a modern data center uses each year goes to air conditioning. To cut energy consumption, Microsoft has sealed servers in a nitrogen-filled chamber and sunk it to the bottom of the sea, using seawater to cool the machines. In theory, such a data center can then run on wind and solar energy, which saves on electricity bills and cuts the data center’s carbon emissions.
